Leduc Holdem


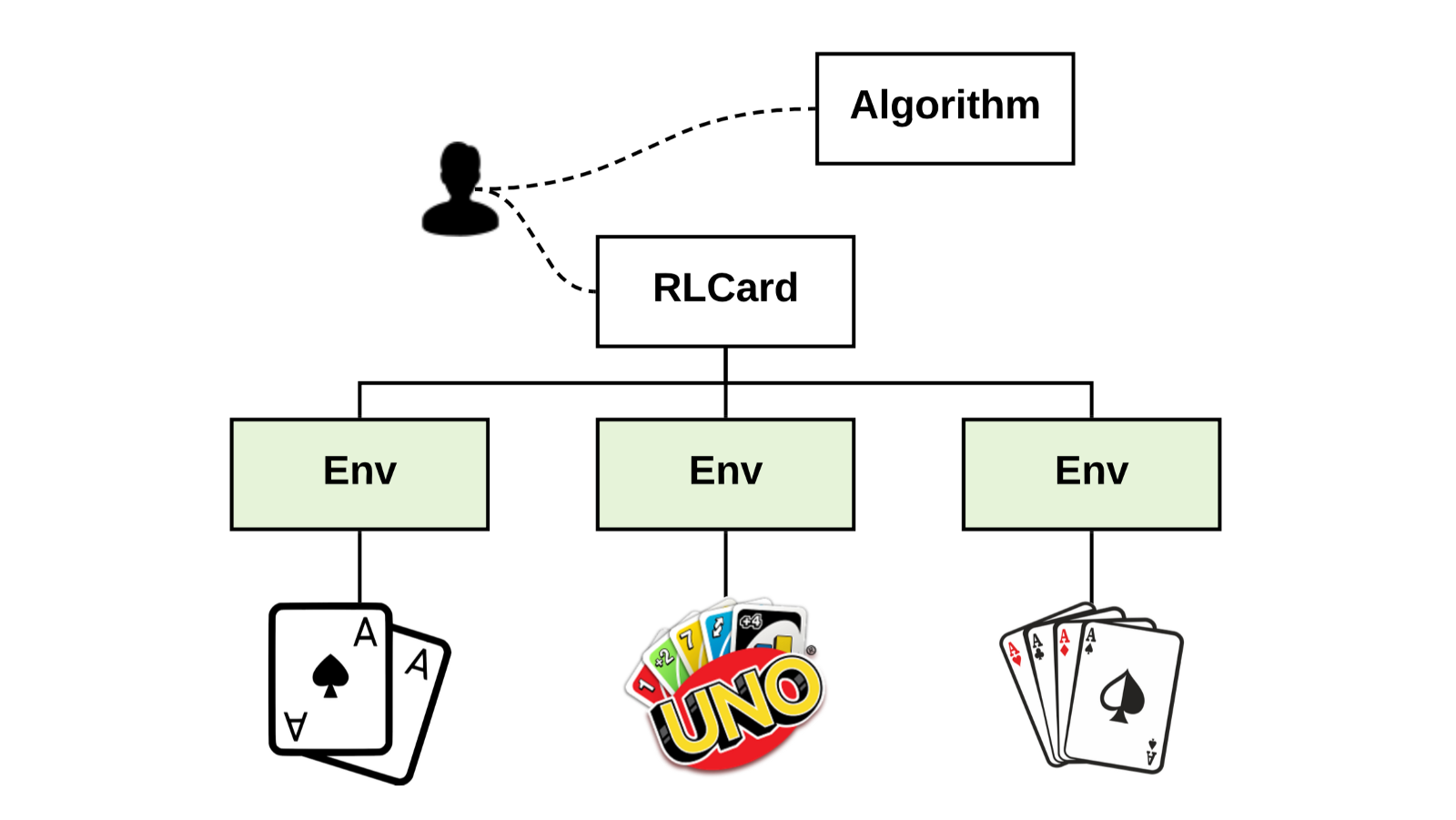

A Toolkit for Reinforcement Learning in Card Games

Specifically, the rewards of betting games (Leduc Hold’em, Limit Texas Hold’em, No-limit Texas Holdem) are defined as the average winning big blinds per hand. The rewards of the other games are obtained directly from the winning rates. In Dou Dizhu, players are in different roles (landlord and peasants). We fix the role of the agent as the.

Project description

RLCard is a toolkit for Reinforcement Learning (RL) in card games. It supports multiple card environments with easy-to-use interfaces. The goal of RLCard is to bridge reinforcement learning and imperfect information games. RLCard is developed by DATA Lab at Texas A&M University and community contributors.

- Training CFR on Leduc Hold'em: to show how step and step_back can be used to traverse the game tree, we provide an example of solving Leduc Hold'em with CFR.

- Leduc Hold’em is a smaller version of Limit Texas Hold’em (first introduced in Bayes’ Bluff: Opponent Modeling in Poker). The deck consists of only two pairs of King, Queen and Jack, six cards in total. Each game has two players, two rounds, a two-bet maximum, and raise amounts of 2 and 4 in the first and second rounds, respectively.

- Official Website: http://www.rlcard.org

- Tutorial in Jupyter Notebook: https://github.com/datamllab/rlcard-tutorial

- Paper: https://arxiv.org/abs/1910.04376

- GUI: RLCard-Showdown

- Resources: Awesome-Game-AI

News:

- We have released RLCard-Showdown, GUI demo for RLCard. Please check out here!

- Jupyter Notebook tutorial available! We have added some examples in R that call the Python interfaces of RLCard with reticulate. See here

- Thanks to @Clarit7 for contributing support for different numbers of players in Blackjack. We call for contributions to gradually make the games more configurable. See here for more details.

- Thanks for the contribution of @Clarit7 for the Blackjack and Limit Hold'em human interface.

- Now RLCard supports environment local seeding and multiprocessing. Thanks for the testing scripts provided by @weepingwillowben.

- Human interface for No-limit Hold'em available. The action space of No-limit Hold'em has been abstracted. Thanks for the contribution of @AdrianP-.

- New game Gin Rummy and human GUI available. Thanks for the contribution of @billh0420.

- PyTorch implementation available. Thanks for the contribution of @mjudell.

Cite this work

If you find this repo useful, you may cite:

Installation

Make sure that you have Python 3.5+ and pip installed. We recommend installing the latest version of rlcard with pip:

Alternatively, you can install the latest stable version with:

The default installation will only include the card environments. To use the TensorFlow implementations of the example algorithms, install the supported version of TensorFlow with:

To try PyTorch implementations, please run:

If you run into any problems when installing PyTorch with the command above, you may follow the instructions on the official PyTorch website to install PyTorch manually.

We also provide a conda installation method:

The conda installation only provides the card environments; you need to install TensorFlow or PyTorch manually as needed.

Examples

Please refer to examples/. A short example is as below.

We also recommend the following toy examples in Python.

R examples can be found here.

Demo

Run examples/leduc_holdem_human.py to play with the pre-trained Leduc Hold'em model. Leduc Hold'em is a simplified version of Texas Hold'em. Rules can be found here.
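As a rough illustration of the Leduc Hold'em rules summarized earlier (a six-card deck with two copies each of King, Queen and Jack, and raise amounts of 2 and 4), the following standard-library sketch builds the deck and deals the cards. The names build_leduc_deck and deal are hypothetical and not part of RLCard, and dealing one private card per player plus one public card follows the usual description of the game rather than anything stated above.

```python
import random

RANKS = ("J", "Q", "K")      # Jack, Queen, King
COPIES_PER_RANK = 2          # two of each rank, six cards in total
RAISE_AMOUNTS = (2, 4)       # raise size in the first and second round


def build_leduc_deck():
    """Return the six-card deck as a list of rank strings."""
    return [rank for rank in RANKS for _ in range(COPIES_PER_RANK)]


def deal(rng, num_players=2):
    """Shuffle a fresh deck, then deal one private card per player and one public card."""
    deck = build_leduc_deck()
    rng.shuffle(deck)
    hands = [deck.pop() for _ in range(num_players)]
    public_card = deck.pop()
    return hands, public_card


if __name__ == "__main__":
    hands, public_card = deal(random.Random(0))
    print("private cards:", hands, "public card:", public_card)
```

Suits are ignored here because only ranks matter for this illustration; RLCard's own game engine under /rlcard/games may represent cards differently.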

We also provide a GUI for easy debugging. Please check here. Some demos:

Available Environments


We provide a complexity estimation for the games on several aspects. InfoSet Number: the number of information sets; InfoSet Size: the average number of states in a single information set; Action Size: the size of the action space. Name: the name that should be passed to rlcard.make to create the game environment. We also provide the link to the documentation and the random example.

| Game | InfoSet Number | InfoSet Size | Action Size | Name | Usage |
|---|---|---|---|---|---|
| Blackjack (wiki, baike) | 10^3 | 10^1 | 10^0 | blackjack | doc, example |
| Leduc Hold’em (paper) | 10^2 | 10^2 | 10^0 | leduc-holdem | doc, example |
| Limit Texas Hold'em (wiki, baike) | 10^14 | 10^3 | 10^0 | limit-holdem | doc, example |
| Dou Dizhu (wiki, baike) | 10^53 ~ 10^83 | 10^23 | 10^4 | doudizhu | doc, example |
| Simple Dou Dizhu (wiki, baike) | - | - | - | simple-doudizhu | doc, example |
| Mahjong (wiki, baike) | 10^121 | 10^48 | 10^2 | mahjong | doc, example |
| No-limit Texas Hold'em (wiki, baike) | 10^162 | 10^3 | 10^4 | no-limit-holdem | doc, example |
| UNO (wiki, baike) | 10^163 | 10^10 | 10^1 | uno | doc, example |
| Gin Rummy (wiki, baike) | 10^52 | - | - | gin-rummy | doc, example |

API Cheat Sheet

How to create an environment

You can use the following interface to make an environment. You may optionally specify some configurations with a dictionary.

- env = rlcard.make(env_id, config={}): Make an environment. env_id is the name of an environment (a string); config is a dictionary that specifies environment configurations, which are as follows.

  - seed: Default None. Set an environment-local random seed for reproducing the results.

  - env_num: Default 1. It specifies how many environments run in parallel. If the number is larger than 1, the tasks will be assigned to multiple processes for acceleration.

  - allow_step_back: Default False. True if the step_back function is allowed, so that the tree can be traversed backward.

  - allow_raw_data: Default False. True if raw data is allowed in the state.

  - single_agent_mode: Default False. True if using single agent mode, i.e., a Gym-style interface with the other players as pretrained/rule models.

  - active_player: Default 0. If single_agent_mode is True, active_player specifies which player to operate on in single agent mode.

  - record_action: Default False. If True, a field action_record will be in the state to record the historical actions. This may be used for human-agent play.

  - Game specific configurations: These fields start with game_. Currently, we only support game_player_num in Blackjack.
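The configuration fields above can be collected in a plain dictionary. The sketch below mirrors the documented defaults and merges user overrides into them; build_config and DEFAULT_CONFIG are hypothetical helper names, and the rejection of unknown keys is an assumption of this sketch rather than documented RLCard behavior.

```python
# Defaults as listed in the configuration description above.
DEFAULT_CONFIG = {
    "seed": None,
    "env_num": 1,
    "allow_step_back": False,
    "allow_raw_data": False,
    "single_agent_mode": False,
    "active_player": 0,
    "record_action": False,
}


def build_config(**overrides):
    """Merge overrides into the documented defaults, rejecting unknown keys."""
    config = dict(DEFAULT_CONFIG)
    for key, value in overrides.items():
        # Game-specific options are documented as starting with "game_".
        if key not in DEFAULT_CONFIG and not key.startswith("game_"):
            raise KeyError(f"unknown config field: {key}")
        config[key] = value
    return config


config = build_config(seed=42, allow_step_back=True)
# With RLCard installed, the dictionary would be passed as:
#   env = rlcard.make("leduc-holdem", config=config)
```

Only the fields that differ from the defaults need to be supplied, so a dictionary such as {'seed': 42} on its own should work equally well with rlcard.make.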

Once the environment is made, we can access some information of the game.

- env.action_num: The number of actions.

- env.player_num: The number of players.

- env.state_space: The state space of the observations.

- env.timestep: The number of timesteps stepped by the environment.

What is state in RLCard

State is a Python dictionary. It will always have observation state['obs'] and legal actions state['legal_actions']. If allow_raw_data is True, state will also have raw observation state['raw_obs'] and raw legal actions state['raw_legal_actions'].
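A minimal sketch of how a state dictionary might be consumed is shown below. The example state is made up for illustration, and treating state['legal_actions'] as a list of integer action IDs is an assumption; the exact encoding of state['obs'] depends on the environment.

```python
import random


def choose_random_legal_action(state, rng=random):
    """Pick one entry of state['legal_actions'] uniformly at random."""
    legal_actions = list(state["legal_actions"])
    if not legal_actions:
        raise ValueError("state has no legal actions")
    return rng.choice(legal_actions)


# Hypothetical state with made-up values, shaped as described above.
example_state = {
    "obs": [0, 1, 0, 0, 1, 0],
    "legal_actions": [0, 2, 3],
}

action = choose_random_legal_action(example_state, random.Random(0))
```

Restricting the choice to state['legal_actions'] avoids submitting an action that the game would not accept in the current situation.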

Basic interfaces

The following interfaces provide basic usage. They are easy to use but make assumptions about the agent: the agent must follow the agent template.

- env.set_agents(agents): agents is a list of Agent objects. The length of the list should be equal to the number of players in the game.

- env.run(is_training=False): Run a complete game and return trajectories and payoffs. The function can be used after set_agents is called. If is_training is True, it will use the step function in the agent to play the game. If is_training is False, eval_step will be called instead.
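An agent that fits the basic interfaces needs at least the step and eval_step methods mentioned above. The class below is a hedged sketch of such an agent: RandomLegalAgent is a hypothetical name, and the use_raw attribute and the (action, probabilities) return value of eval_step are assumptions about the agent template, which is not reproduced in this document.

```python
import random


class RandomLegalAgent:
    """Hypothetical agent that picks uniformly among the legal actions."""

    use_raw = False  # assumption: the agent consumes encoded (non-raw) actions

    def __init__(self, seed=None):
        self._rng = random.Random(seed)

    def step(self, state):
        """Used when is_training is True; returns the chosen action."""
        return self._rng.choice(list(state["legal_actions"]))

    def eval_step(self, state):
        """Used when is_training is False; returns (action, probabilities)."""
        legal_actions = list(state["legal_actions"])
        probs = {a: 1.0 / len(legal_actions) for a in legal_actions}
        return self._rng.choice(legal_actions), probs


# With RLCard installed, one agent per player would be registered as:
#   env.set_agents([RandomLegalAgent() for _ in range(env.player_num)])
#   trajectories, payoffs = env.run(is_training=False)
```

Keeping step and eval_step separate lets a learning agent explore during training while acting greedily during evaluation; this random agent behaves the same in both.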

Advanced interfaces

For advanced usage, the following interfaces allow flexible operations on the game tree. These interfaces do not make any assumptions about the agent.

- env.reset(): Initialize a game. Return the state and the first player ID.

- env.step(action, raw_action=False): Take one step in the environment. action can be a raw action or an integer; raw_action should be True if the action is a raw action (string).

- env.step_back(): Available only when allow_step_back is True. Take one step backward. This can be used for algorithms that operate on the game tree, such as CFR.

- env.is_over(): Return True if the current game is over. Otherwise, return False.

- env.get_player_id(): Return the Player ID of the current player.

- env.get_state(player_id): Return the state that corresponds to player_id.

- env.get_payoffs(): At the end of the game, return a list of payoffs for all the players.

- env.get_perfect_information(): (Currently supported only for some of the games) Obtain the perfect information at the current state.
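The advanced interfaces above can be combined into a manual game loop and a depth-first tree traversal. So that the sketch runs without RLCard installed, it uses MatchingPenniesEnv, a hypothetical stand-in that only borrows the method names listed above; the assumption that step returns the next state and the next player ID is not stated in the list and should be checked against the real environment.

```python
import random


class MatchingPenniesEnv:
    """Hypothetical two-player stand-in exposing the method names listed above."""

    player_num = 2

    def reset(self):
        self._actions = []
        return self.get_state(0), 0

    def step(self, action):
        # Assumption: step returns (next_state, next_player_id).
        self._actions.append(action)
        next_player = self.get_player_id()
        return self.get_state(next_player), next_player

    def step_back(self):
        self._actions.pop()

    def is_over(self):
        return len(self._actions) == self.player_num

    def get_player_id(self):
        return len(self._actions) % self.player_num

    def get_state(self, player_id):
        # Imperfect information: the opponent's choice is not in the observation.
        return {"obs": [player_id], "legal_actions": [0, 1]}

    def get_payoffs(self):
        first, second = self._actions
        return [1, -1] if first == second else [-1, 1]


def play_one_game(env, rng):
    """Drive one game with reset/step/is_over and return the final payoffs."""
    state, player_id = env.reset()
    while not env.is_over():
        action = rng.choice(state["legal_actions"])
        state, player_id = env.step(action)
    return env.get_payoffs()


def count_terminal_histories(env):
    """Depth-first traversal using step and step_back, as a tree algorithm would."""
    if env.is_over():
        return 1
    state = env.get_state(env.get_player_id())
    total = 0
    for action in state["legal_actions"]:
        env.step(action)
        total += count_terminal_histories(env)
        env.step_back()  # undo the move before trying the next action
    return total


env = MatchingPenniesEnv()
payoffs = play_one_game(env, random.Random(0))
env.reset()
num_leaves = count_terminal_histories(env)
```

With a real RLCard environment, the traversal would additionally require allow_step_back to be True when the environment is made.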

Running with multiple processes

RLCard now supports acceleration with multiple processes. Simply change env_num when making the environment to indicate how many processes should be used. Currently, only the run() function is supported with multiple processes. An example is DQN on Blackjack.

Library Structure

The purposes of the main modules are listed below:

- /examples: Examples of using RLCard.

- /docs: Documentation of RLCard.

- /tests: Testing scripts for RLCard.

- /rlcard/agents: Reinforcement learning algorithms and human agents.

- /rlcard/envs: Environment wrappers (state representation, action encoding etc.)

- /rlcard/games: Various game engines.

- /rlcard/models: Model zoo including pre-trained models and rule models.

Evaluation

The performance is measured by winning rates through tournaments. Example outputs are as follows:

For your information, there is a nice online evaluation platform pokerwars that could be connected with RLCard with some modifications.
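The tournament-style measurement described in this section amounts to averaging per-player payoffs over many games. The sketch below shows that computation in isolation; average_payoffs is a hypothetical helper, and the scripted results are made-up stand-ins for real games, not measured outcomes.

```python
def average_payoffs(play_game, num_games):
    """Average the per-player payoffs returned by play_game over num_games games."""
    if num_games <= 0:
        raise ValueError("num_games must be positive")
    totals = None
    for _ in range(num_games):
        payoffs = play_game()
        if totals is None:
            totals = [0.0] * len(payoffs)
        for player_id, payoff in enumerate(payoffs):
            totals[player_id] += payoff
    return [total / num_games for total in totals]


# Hypothetical fixed results standing in for real games: player 0 wins 3 of 4.
scripted_results = iter([[1, -1], [1, -1], [-1, 1], [1, -1]])
averages = average_payoffs(lambda: next(scripted_results), num_games=4)

# With RLCard installed, play_game could wrap env.run, which is described
# above as returning trajectories and payoffs:
#   averages = average_payoffs(lambda: env.run(is_training=False)[1], 1000)
```

For betting games, where rewards are defined in big blinds, the same average reads as winnings per hand rather than as a winning rate.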

More Documents

For more documentation, please refer to the Documents for general introductions. API documents are available at our website.

Contributing

Contributions to this project are greatly appreciated! Please create an issue for feedback or bugs. If you want to contribute code, please refer to the Contributing Guide.

Acknowledgements

We would like to thank JJ World Network Technology Co.,LTD for the generous support and all the contributions from the community contributors.

Release history

0.2.8

0.2.7

0.2.6

0.2.5

0.2.4

0.2.3

0.2.1

0.2.0

0.1.17

0.1.15

0.1.14

0.1.13

0.1.12

0.1.11

0.1.10

0.1.9

0.1.8

0.1.7

0.1.6

0.1.5

0.1.4

0.1.3

0.1.2

0.1.1

0.1

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

| Filename, size | File type | Python version | Upload date | Hashes |
|---|---|---|---|---|
| rlcard-0.2.8.tar.gz (6.7 MB) | Source | None | | |

Hashes for rlcard-0.2.8.tar.gz

| Algorithm | Hash digest |
|---|---|
| SHA256 | 48677a1bf1f5e925c3995de0890db7d111cdb11626a6f3e2e1ddbbe750c9f332 |
| MD5 | bad0f3be0127c61c047fb1a1f6da59b7 |
| BLAKE2-256 | 1df79c4f68698fb70fdc4fced67d1f7d2e7250be5fdc51c9b3b6779d53806307 |

| BLAKE2-256 | 1df79c4f68698fb70fdc4fced67d1f7d2e7250be5fdc51c9b3b6779d53806307 |